The Algorithm Will Eat Democracy Unless We Revive the Fairness Doctrine

In 1927, we regulated radio because one voice could reach thousands. Today, AI-powered lies reach billions in seconds, and we're doing nothing.

Paul Zurav

1/21/2026 · 6 min read

In January 2024, an 82-year-old retiree named Steve Beauchamp watched a video of Elon Musk promoting a cryptocurrency investment. "I mean, the picture of him, it was him," Steve told reporters. He drained his retirement fund. All $690,000 of it.

The video wasn't real. It was a deepfake. Steve is not alone.

A finance worker in Hong Kong joined a video conference call with her company's CFO and several colleagues to discuss an urgent acquisition. She authorized 15 transfers totaling $25 million. Every person on that call except her was AI-generated.

A woman in Ontario saw Elon Musk on Facebook promoting an investment opportunity. She took out a second mortgage, borrowed from family and friends, and maxed out her credit cards. Gone. $1.7 million. All of it.

These aren't isolated incidents. They're the new normal.

The Numbers Don't Lie (But AI Does)

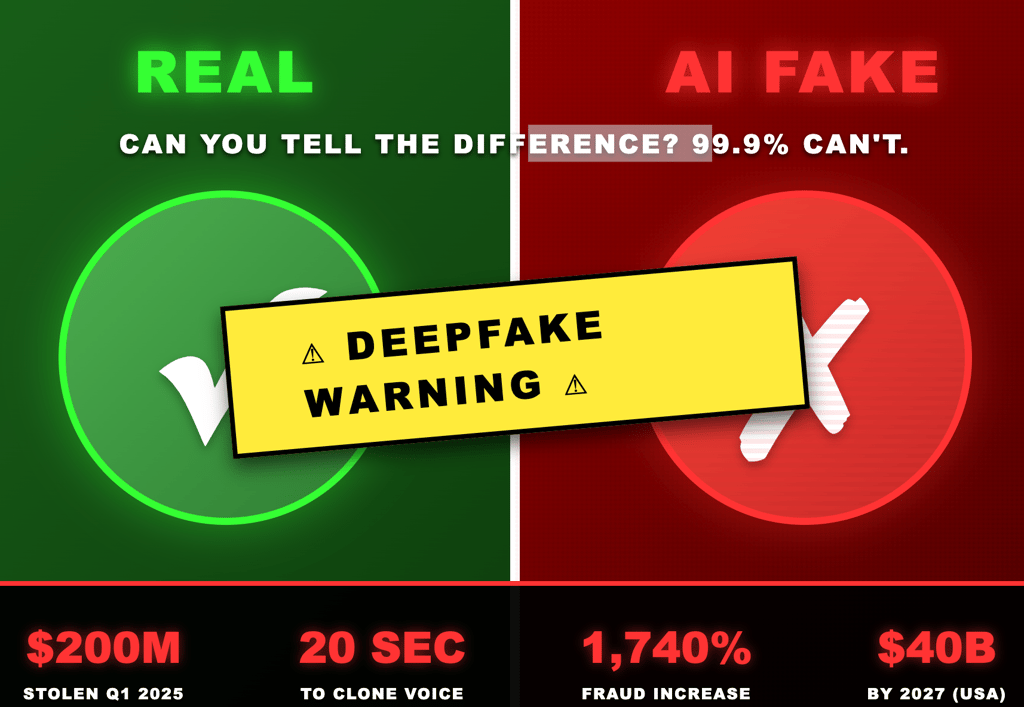

Deepfake fraud caused $200 million in losses in the first quarter of 2025 alone. That's just Q1. Just what was reported. Just what we know about.

The bigger picture? Deloitte projects that fraud losses enabled by generative AI will hit $40 billion in the United States by 2027, a 32% annual growth rate. North America saw a 1,740% surge in deepfake fraud cases between 2022 and 2023. Not a typo. One thousand seven hundred forty percent.

Here's the terrifying part: creating a convincing deepfake can now take as little as 20 seconds of audio and 45 minutes of your time. Many of the tools are free. The fake Biden robocall that hit New Hampshire voters cost $1 to create and took less than 20 minutes to make.

Deepfake-as-a-Service platforms went mainstream in 2025. You don't need technical skills anymore. You don't need expensive software. You need internet access and bad intentions.

We've Been Here Before

In 1927, radio was the revolutionary new technology that could reach millions of people with unchecked messaging. The Radio Act of 1927 was our first comprehensive attempt to regulate it. By 1949, that framework had evolved into the Fairness Doctrine, which required broadcasters to cover controversial issues of public importance and to give airtime to contrasting viewpoints.

The logic was simple: when technology gives someone the power to influence millions, there need to be guardrails. The truth matters. Balance matters. Accountability matters.

In 1987, Congress passed a bill to write the Fairness Doctrine into law. Ronald Reagan vetoed it. Weeks later, the FCC eliminated the doctrine itself. The argument? First Amendment rights. Free speech.

But here's the thing about free speech: it doesn't give you the right to yell fire in a crowded theater when there isn't one. And it sure as hell doesn't give you the right to manufacture a fire out of thin air using AI and broadcast it to billions of people before anyone can verify it's fake.

Fast Forward to Right Now

We handed control of reality-shaping technologies to Mark Zuckerberg, Rupert Murdoch, and Elon Musk with zero accountability. Fox "News" and social media platforms spread lies that led directly to the January 6th insurrection. Climate change denial, pushed by the same apparatus, threatens human extinction.

Now add AI to that toxic mix.

Elon Musk is the most commonly impersonated figure in deepfake scams, according to research firm Sensity. His face, his voice, his credibility, weaponized at scale to steal people's life savings. The scammers who defrauded victims through fake Elon Musk videos received at least $5 million between March 2024 and January 2025, and that's just one operation we can track.

Romance scammers now use deepfake video calls to "prove" their identity. They smile, nod, and react in real time. Victims report video chats with people who looked completely real but were AI-generated from stolen photos. In one case, deepfakes of a soap opera actor were used to scam a Los Angeles woman out of her life savings.

Corporate executives are being impersonated in video conferences to authorize fraudulent wire transfers. Ferrari's CEO had his voice cloned so perfectly that it replicated his southern Italian accent. WPP's CEO was targeted by scammers who cloned his voice for a fake Teams call.

The Detection Problem

Here's the nightmare: 70% of people doubt their ability to distinguish real voices from fake ones. They're right to doubt. Research shows humans cannot consistently identify AI-generated voices. We often perceive them as identical to real people.

One study found that only 0.1% of participants could correctly identify all fake and real media shown to them. Not 1%. Not 10%. Zero point one percent.

Detection software struggles too. The effectiveness of defensive AI detection tools drops by 45 to 50% against real-world deepfakes outside controlled lab conditions.

Translation: We've reached a point where seeing is no longer believing, and we have no reliable way to tell the difference.

But Elections Were Fine, Right?

The 2024 elections didn't collapse into deepfake chaos. Meta reported that less than 1% of fact-checked misinformation during the 2024 election cycles was AI content. Traditional "cheap fakes" (edited videos, not AI) were used seven times more often than deepfakes.

So we're safe? Not even close.

We got lucky. The technology wasn't quite there yet, and people were watching for it. But every month, the tools get better, cheaper, more accessible. The 2024 elections were a warning shot. The real attack hasn't started yet.

And besides, focusing on elections misses the point. Deepfakes aren't primarily an election problem. They're a reality problem.

The Liar's Dividend

There's a concept called "the liar's dividend." It works like this: once deepfakes become convincing enough, anyone can dismiss real evidence as fake. Caught on camera doing something terrible? Just claim it's AI-generated. Your supporters will believe you because they've seen how good deepfakes can be.

Truth and lies become indistinguishable. Evidence becomes meaningless. Reality itself becomes a matter of partisan interpretation.

When that happens, democracy doesn't just get harder. It becomes impossible.

The "Free Speech" Dodge

I already hear the objection: "But regulating this violates free speech!"

No. It doesn't.

The First Amendment protects your right to speak. It does not protect your right to impersonate someone else using AI to defraud people. It does not protect your right to manufacture synthetic evidence. It does not protect your right to operate platforms that algorithmically amplify lies for profit while claiming you're just a neutral conduit.

In 1927, we recognized that radio required regulation because one voice could reach thousands. Today, one AI-generated video can reach billions in seconds, appear in millions of social media feeds, get embedded in thousands of news aggregators, and become "truth" before anyone can verify it's false.

If radio needed the Fairness Doctrine, what the hell do you call what we need now?

What Needs to Happen

We need a modern Fairness Doctrine built for the AI age. Not the 1949 version, the 2026 version. Here's what that looks like:

1. Platform Accountability: Social media platforms and AI tools should not be able to hide behind Section 230 when they algorithmically amplify content they know, or should know, is fraudulent. You want algorithmic feeds? Fine. You're responsible for what your algorithm promotes.

2. Mandatory Authentication: AI-generated content must be labeled as such. Period. If you create synthetic media, watermark it. If your platform hosts it, detect it. If you fail to do either, you're liable for the harm it causes.

3. Rapid Response Systems: When deepfakes appear, platforms must have systems to flag, investigate, and remove them within hours, not days or weeks. Lives and money are being lost while "review processes" grind along.

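4. Criminal Penalties: Creating deepfakes to defraud, harass, or spread non-consensual intimate imagery is already illegal in some states, and the federal TAKE IT DOWN Act of 2025 now covers publishing intimate deepfakes. Close the remaining gaps at the federal level. Make the penalties serious enough to matter.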

5. Corporate Liability: If your company gets defrauded because you failed to implement basic verification protocols (callback procedures, multi-factor authentication, secondary confirmation channels), that's on you. But if platforms enabled the fraud by failing to detect obvious deepfakes, that's on them too.

The Two Biggest Problems Humanity Faces

We're staring down two existential threats. If we don't eliminate the threats to our democracy, the world will never be the same. If we don't reverse climate change, we're dooming future generations to extinction.

Both problems require us to distinguish truth from lies. Both require functioning information systems. Both require the ability to trust evidence.

AI-powered deepfakes destroy all three.

The Bottom Line

In 1927, we regulated radio because one technology could reach thousands with unchecked messaging. We recognized that with great communicative power comes great responsibility.

It's 2026. AI can generate fake videos, fake voices, and fake evidence so convincing that even experts can't reliably detect them. This content can reach billions of people in seconds. It's being used right now to steal billions of dollars, destroy reputations, undermine elections, and erode the very concept of truth.

If we needed the Fairness Doctrine when radio could reach thousands, we desperately need it now when AI-enhanced lies can reach billions before we even realize we've been fooled.

The technology isn't going away. It's getting better, cheaper, more accessible every single day. The question isn't whether we'll regulate it. The question is whether we'll do it before or after democracy collapses under the weight of synthetic reality.

Your move, America.


Copyright: © 2026 CTEK LLC / Paul Zurav